Applying advanced optimization methods like column generation can be rather difficult due to the complexity involved in implementing such an algorithm. The GCG (generic column generation) project, which is developed in cooperation of ZIB and our chair, is an attempt to make applying column generation easier. To make using GCG easier we have now added an interface for it into AMoR (see also my previous article). It allows to easily define blocks of constraints which shall be treated in a subproblem. In fact being able to quickly toy around with some models just helped us to discover a bug in GCG.

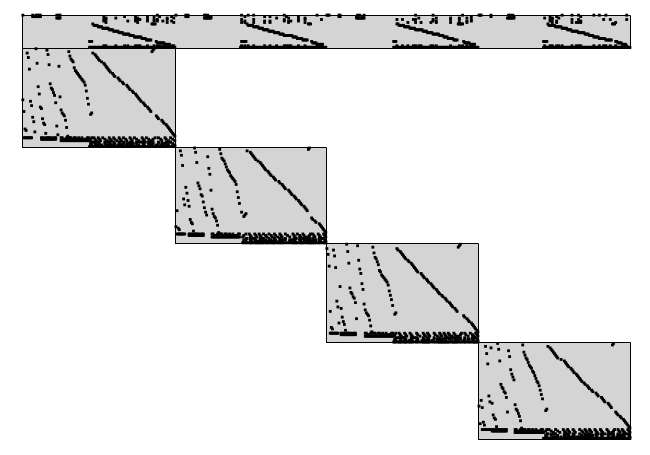

But before I go into more details let me first give you a small introduction into the nuts and bolts of column generation. I only consider the use in the context of Dantzig-Wolfe decomposition, i.e., when we have a constraint matrix that consists of some linking constraints (single border part) and a set of subproblems (block diagonal part):

The idea is to exploit, that the blocks of the block diagonal part are only linked via few constraints. If it weren't for those we could divide the whole problem into those smaller problems and solve them completely separate. Due to the linking constraints we need some clever way to coordinate the subproblems such that they find solutions that are feasible when put together. One way to do this coordination is - guess what - column generation. Very abstractly told this works as follows:

Solve the subproblems separately. For each solution we create a binary variable that encodes whether we choose this solution for this subproblem or not. With those variables we create a new binary program (the master problem) to find a subset of them that is feasible for the linking constraints and has one solution for each subproblem.

Each subproblem might have been solved optimally - but when combining the solutions those might not be optimal (or even feasible). As a result we need to search for other subproblem solutions that might improve the overall solution. This can be done by using the theory behind LP duality. The dual information encodes how much each constraints affects our objective. Taking this knowledge from the master problem we can go back to the subproblems and change their objective such that solutions are encouraged which will be less restrictive towards the most "expensive" constraints of the master problem.

This procedure is repeated until no subproblem solution is found that might potentially improve the master problem (again we can determine this using the dual information). If you want to know more about the workings involved, please feel free to look into the Primer in Column Generation.

Sadly just understanding Dantzig-Wolfe decomposition and column generation theoretically is far from sufficient when it comes to implementing ones own algorithm for it. Due to the enormous complexity involved in todays MIP solvers a lot of details need to be accounted for. For example presolving is absolutely crucial for an efficient solver, therefore one needs to know how to deal with the presolved instead of the orginal formulation (or how to get the original formulation back). If the master problem is not immediately integral, one needs to perform branching. But doing this correctly together with column generation can be a major headache. Furthermore there exist a number of techniques (e.g., stabilization) which are important to actually make column generation efficient. GCG is an attempt to build a system to provide those parts in a generic way. And to facilitate using GCG there is now a corresponding interface in AMoR.

The AMoR GCG interface

Lets dive into an example for how to use the AMoR GCG interface. A classical use case for Dantzig-Wolfe decomposition is the classical bin packing problem: We have a set of items with different sizes which shall be stored in the smallest amount of bins. The bins have a limited capacity and can not take more items than its capacity. The following snippet randomly generates a bin packing instance with 30 items and bins of size 20:

n_items = 30

bin_size = 20

items = (1..n_items).to_a

bins = (1..(n_items / 2)).to_a

item_sizes = Hash.new

items.each {|i| item_sizes[i] = Random.rand(bin_size/2)+1 }

The variables we use need to encode the following information: in which bin an item is stored and which bins are actually used (we want to use as few as possible). Therefore we take one binary variable per bin, encoding the usage of that bin and one variable per combination of item and bin, encoding whether we put that item into the respective bin:

for_all(bins) do |bin|

for_all(items) {|i| binary x("item #{i} in bin #{bin}")}

binary x("used #{bin}")

end

The objective is to use as few bins as possible:

min sum(bins) {|bin| x("used #{bin}")}

Now comes the interesting part - the constraints. For Dantzig-Wolfe decomposition we need to distinguish between the constraints that shall belong to a separate block and those that will be in the border. For bin packing a good way to define the blocks is to choose one for every bin. Then the linking constraints are those that touch multiple bins. We need to make sure that each item is packed into some bin which means we need one constraint for each item that is touching every bin:

for_all(items) {|i| sum(bins) {|bin| x("item #{i} in bin #{bin}")} == 1 }

For each bin in turn we need to make sure that the bins capacity is not overused. These constraints each deal with only one bin and can therefore be decomposed into different blocks as follows:

for_all(bins) do |bin|

block do

st sum(items) {|i| item_sizes[i] * x("item #{i} in bin #{bin}")} <=

bin_size * x("used #{bin}")

end

end

The block command starts a new block that contains all the constraints defined in it. Note that each block consists of exactly one constraint and will consist of solving a knapsack problem with varying values for the items (those values are determined by the master problem). The details for this will be handled by GCG.

Now that we have defined our model it is time to call the solver and print out the solution:

gcg

puts "Used #{objective} bins"

bins.each do |bin|

if x("used #{bin}") > 0

puts "Using bin #{bin}"

items.each do |i|

if x("item #{i} in bin #{bin}") > 0

puts " #{i}"

end

end

end

end

If we call scip instead of gcg we can compare the running times and results of the two different solvers to each other. Note that column generation is not a cure for all and it highly depends on the problem whether it can help or not. Also a generic tool like GCG might only help to indicate whether it can be of value to pursue further efforts into this direction. If it turns out, that column generation might be an effective method, one can next start to build a problem specific column generation algorithm - and potentially start a longer research journey along this route.

comments powered by Disqus